Sharding the Clusters across Argo CD Application Controller Replicas

Argo CD is an open-source GitOps continuous delivery tool that automates the deployment of applications to Kubernetes clusters. With growing GitOps and Kubernetes adoption, Argo CD has emerged as one of the most popular choices in the GitOps ecosystem. This post is part of a series in which we delve into Argo CD issues we have observed while providing Argo CD enterprise support to our customers.

In this blog post, we will be diving deep into a specific problem that may occur with your Argo CD setup in case you’re using it to manage multiple clusters. But before we jump into the specific problem statement, let’s quickly examine what Argo CD comprises internally.

Components of Argo CD

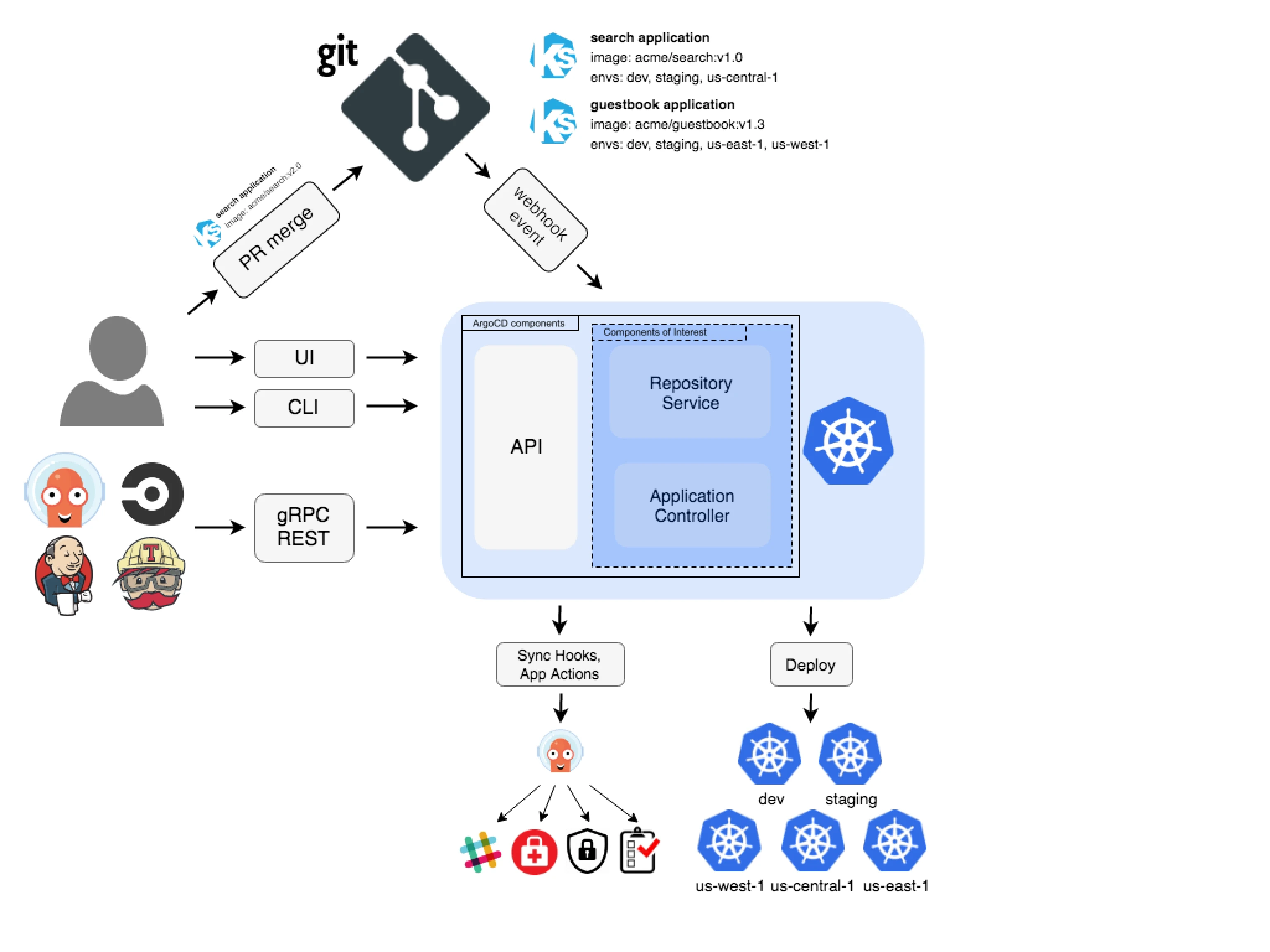

Argo CD is made up of several components, each with its own responsibilities. The following diagram shows what a typical Argo CD architecture looks like:

(Image Source: Argo CD Architecture)

As the diagram shows, Argo CD has three primary components:

- API Server

- Repository Service (also known as Repo Server)

- Application Controller

Of these three, the components relevant to this blog post are the Repository Service and the Application Controller.

Repository Service (aka Repo Server)

The Repo Server connects to the Git repositories where application manifests are stored and keeps a local cache of their contents. It is also responsible for generating Kubernetes manifests from the given application specification.

Application Controller

The Application Controller compares the live state (what is running in the cluster) with the desired, or target, state (what is in the repo). If the two differ, it can optionally synchronize the live state to the desired state, which involves deploying, updating, or removing resources as necessary.
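To make the compare-and-sync idea concrete, here is a deliberately simplified Python sketch. It models live and desired state as plain dictionaries keyed by a hypothetical "kind/namespace/name" identity and works out what a sync would create, update, or delete. This only illustrates the concept; it is not how Argo CD actually computes diffs, and the helper name plan_sync and the key format are our own assumptions.

```python
from typing import Dict, List, Tuple

# Hypothetical model: each resource is identified by "kind/namespace/name"
# and its spec is a plain dict. Real Argo CD diffing is far more involved.
State = Dict[str, dict]


def plan_sync(live: State, desired: State) -> Tuple[List[str], List[str], List[str]]:
    """Return (to_create, to_update, to_delete) needed to make live match desired."""
    # Present in Git but missing from the cluster.
    to_create = sorted(key for key in desired if key not in live)
    # Present in both, but the specs differ.
    to_update = sorted(
        key for key in desired if key in live and live[key] != desired[key]
    )
    # Tracked as live but no longer desired: candidates for deletion
    # (Argo CD calls this pruning and only does it when enabled).
    to_delete = sorted(key for key in live if key not in desired)
    return to_create, to_update, to_delete


if __name__ == "__main__":
    live = {
        "Deployment/default/web": {"replicas": 2},
        "ConfigMap/default/old": {"k": "v"},
    }
    desired = {
        "Deployment/default/web": {"replicas": 3},
        "Service/default/web": {"port": 80},
    }
    print(plan_sync(live, desired))
```

Here the Service would be created, the Deployment updated, and the ConfigMap listed for deletion.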

There are many cases when you might want to consider scaling the Argo CD Application Controller, such as:

- High number of applications and resources

- Complex application dependencies

- Frequent updates

- Large cluster size

- Network latency or connectivity issues between the Argo CD Application Controller and the managed clusters

- High availability requirements

When the Argo CD Application Controller StatefulSet is scaled, users typically expect the Kubernetes clusters to be sharded uniformly across the Application Controller replicas. However, that is not necessarily the case. Let's look into this in detail in the next section.

Problem Statement

One of our customers once ran into non-uniform sharding in the Argo CD Application Controller: they were facing slow synchronization despite running multiple replicas of the Argo CD Application Controller for their clusters.

At first glance, it seemed that the Application Controller had been handling too many clusters and was using too many resources. So the customer scaled up the Argo CD Application Controller StatefulSet, expecting each replica to focus on a subset of clusters and thus distribute the workload and memory usage. This process is known as sharding, and Argo CD's official documentation recommends leveraging it. However, in this case the sharding mechanism of the Argo CD Application Controller did not help much.

When our Argo CD support engineers looked deeper into the problem, they found that some Application Controller replicas were managing more clusters than others, and a couple of replicas were managing no clusters at all. In other words, increasing the number of replicas does not necessarily mean that your clusters will be sharded uniformly across them.

To see how the clusters are sharded, you can use the argocd command-line utility. If it is not available, install it by following the Argo CD CLI installation steps. The argocd admin commands talk to Kubernetes directly, so once the CLI is installed and your kubeconfig points at the cluster where Argo CD runs, run the following command:

```shell
argocd admin cluster stats
```

This command shows the shard assigned to each cluster managed by that Argo CD instance. The following is a snippet of its output:

Note: The snippet below is not the complete output; it is only meant to show how to interpret the output of the argocd admin cluster stats command.

```text
SERVER                          SHARD  CONNECTION  NAMESPACES COUNT  APPS COUNT  RESOURCES COUNT
https://kubernetes.default.svc  0                  4                 65          217
<redacted>                      4                  4                 65          217
<redacted>                      4                  5                 73          228
<redacted>                      3                  4                 65          217
<redacted>                      0                  4                 65          217
<redacted>                      1                  5                 73          228
<redacted>                      3                  4                 65          217
<redacted>                      4                  4                 65          217
<redacted>                      4                  5                 73          228
```

In the above snippet, the first column contains the server address of each Kubernetes cluster, and the second column contains the index of the Argo CD Application Controller replica in charge of maintaining that cluster's live state. For example, the first cluster, with the server address https://kubernetes.default.svc, is maintained by the replica with index 0, in other words argocd-application-controller-0. All replicas of the Argo CD Application Controller carry their index as a suffix, so shard 0 corresponds to argocd-application-controller-0, shard 1 to argocd-application-controller-1, and so on.

Looking at the snippet, you can see that four clusters are handled by the argocd-application-controller-4 pod, argocd-application-controller-0 and argocd-application-controller-3 handle two clusters each, argocd-application-controller-1 handles only one, and argocd-application-controller-2 handles none.
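With many clusters, counting by hand gets tedious. The following Python sketch tallies clusters per shard from the text output. It assumes whitespace-separated columns with the server address first and the shard index second, and it takes the replica count as a parameter because idle replicas never appear in the output. The function name clusters_per_shard is hypothetical.

```python
from collections import Counter
from typing import Dict


def clusters_per_shard(stats_output: str, replicas: int) -> Dict[int, int]:
    """Count clusters per shard from `argocd admin cluster stats` text output.

    Assumes the server address is the first column, the shard index is the
    second, and the header row starts with "SERVER".
    """
    counts: Counter = Counter()
    for line in stats_output.splitlines():
        fields = line.split()
        # Skip blank lines and the header row.
        if not fields or fields[0] == "SERVER":
            continue
        counts[int(fields[1])] += 1
    # Report every replica, including those that manage no clusters at all.
    return {shard: counts.get(shard, 0) for shard in range(replicas)}
```

For the snippet above, assuming five replicas, this returns {0: 2, 1: 1, 2: 0, 3: 2, 4: 4}, which makes the idle replica obvious.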

Troubleshooting

As a first troubleshooting step, our support engineers analyzed the Argo CD Application Controller logs and found the following message repeated many times in all of the Application Controller replicas:

Note: The timestamps differ between entries; since the message itself was the same, only one entry is shown here.

```text
time="2023-07-21T11:27:12Z" level=info msg="Ignoring cluster <cluster-server-address>"
```

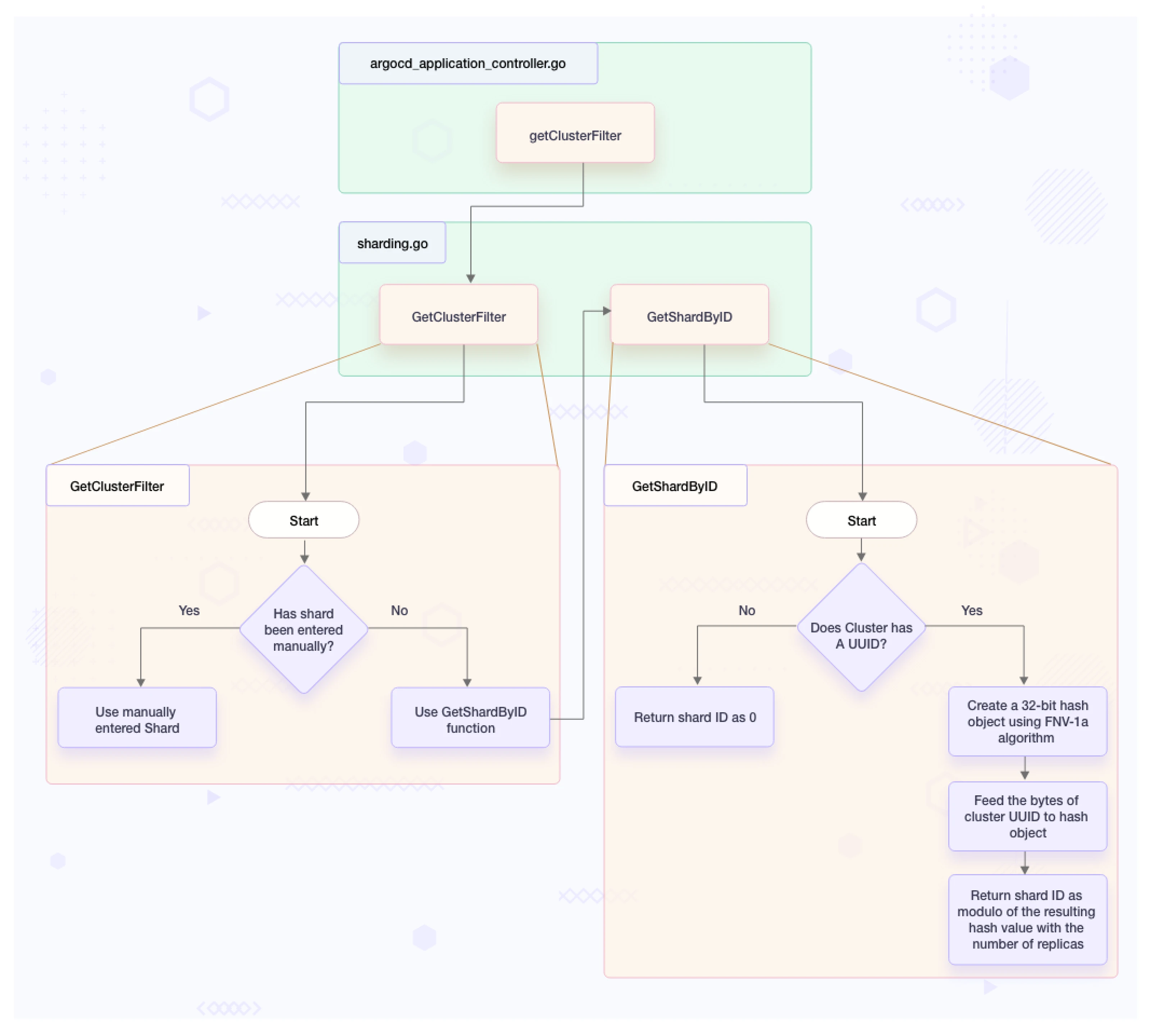

Digging deeper into the sharding logic, our team found that the sharding function assigns each cluster to a particular Argo CD Application Controller replica based on the UUID of the Secret that stores the cluster (assuming the shard has not been set manually). Because the assignment is derived from a randomly generated UUID rather than from how many clusters each replica already manages, nothing guarantees an even spread: some replicas can end up with several clusters while others get none.
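To illustrate why a UUID-based assignment can be uneven, here is a small Python sketch. It assumes the legacy algorithm hashes the cluster Secret's UUID with 32-bit FNV-1a and takes the result modulo the number of replicas, which is our reading of the Argo CD source; treat the exact hash as an assumption. The hypothetical helper replica_owns mimics each replica keeping only its own clusters and skipping the rest, which is plausibly where the repeated "Ignoring cluster" messages come from.

```python
import uuid
from collections import Counter

FNV32_OFFSET = 0x811C9DC5
FNV32_PRIME = 0x01000193


def fnv1a_32(data: bytes) -> int:
    """32-bit FNV-1a hash."""
    h = FNV32_OFFSET
    for byte in data:
        h ^= byte
        h = (h * FNV32_PRIME) & 0xFFFFFFFF
    return h


def legacy_shard(cluster_id: str, replicas: int) -> int:
    """Assumed legacy rule: hash the Secret UUID, then take it modulo replicas."""
    return fnv1a_32(cluster_id.encode()) % replicas


def replica_owns(replica_index: int, cluster_id: str, replicas: int) -> bool:
    """A replica processes a cluster only if the cluster hashes to its index."""
    return legacy_shard(cluster_id, replicas) == replica_index


if __name__ == "__main__":
    replicas = 5
    # Random UUIDs stand in for the metadata.uid of nine cluster Secrets.
    ids = [str(uuid.uuid4()) for _ in range(9)]
    print(Counter(legacy_shard(i, replicas) for i in ids))
```

Running it a few times usually shows an uneven split, often with one or more shards receiving no clusters, because nothing in the rule looks at how many clusters a replica already has.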

Logic behind Sharding in Argo CD Application Controller

The following flow diagram depicts how the sharding logic works internally in the Argo CD codebase.

Note: The diagram depicts the sharding logic of Argo CD versions earlier than 2.8.0. Since Argo CD 2.8.0, this logic is known as the legacy sharding algorithm.

(Argo CD Application Controller Sharding Logic)
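The decision flow for a single cluster can be summarized in code. This Python sketch is our interpretation, not Argo CD's implementation: a manually set shard wins if it points at an existing replica, a cluster with no Secret ID goes to shard 0, and everything else is hashed (FNV-1a again being an assumption). The manual override path is what Solution B below relies on. The function assign_shard is hypothetical, and the handling of out-of-range manual values has differed between Argo CD versions, so check the source for yours.

```python
from typing import Optional


def fnv1a_32(data: bytes) -> int:
    """32-bit FNV-1a hash (assumed to be the hash used by the legacy algorithm)."""
    h = 0x811C9DC5
    for byte in data:
        h = ((h ^ byte) * 0x01000193) & 0xFFFFFFFF
    return h


def assign_shard(cluster_id: str, replicas: int, manual_shard: Optional[int] = None) -> int:
    """Sketch of the legacy decision flow for a single cluster."""
    if replicas < 1:
        raise ValueError("replicas must be at least 1")
    # 1. A manually set shard wins, as long as it points at an existing replica.
    #    Assumption: out-of-range values are ignored here and we fall through.
    if manual_shard is not None and 0 <= manual_shard < replicas:
        return manual_shard
    # 2. A cluster without a Secret ID (assumed: e.g. the in-cluster entry) goes to shard 0.
    if not cluster_id:
        return 0
    # 3. Otherwise hash the Secret UUID and take it modulo the replica count.
    return fnv1a_32(cluster_id.encode()) % replicas
```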

Solution for uniform cluster sharding across Argo CD Application Controller replicas

Today, there are two ways to handle such a scenario:

A. Using round-robin algorithm

B. Manually defining the shard

In our case, the team went with Solution B, as it was the only option available when the issue occurred. However, with the release of Argo CD 2.8.0 (released on August 7, 2023), things have changed for the better :)

Solution A: Use the Round-Robin sharding algorithm (available only for Argo CD 2.8.0 and later releases)

An issue about the sharding algorithm of the Argo CD Application Controller was raised on GitHub, and it was fixed in Argo CD 2.8.0 by pull request 13018.

This means you can upgrade to 2.8.0 or any later version and configure the sharding algorithm to get rid of this issue. If you don't want to (or can't) upgrade to 2.8.0, consider Solution B.

Note, however, that at the time of writing this blog post, round-robin is not the default sharding algorithm of the Argo CD Application Controller; the legacy sharding algorithm is still the default.
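Conceptually, round-robin assigns shards by position rather than by hash. The Python sketch below assumes clusters are ordered by their Secret UUID and that the i-th cluster goes to shard i modulo the number of replicas, which is our understanding of the 2.8.0 implementation; verify it against the Argo CD source for your version. The function round_robin_shards is hypothetical.

```python
from collections import Counter
from typing import Dict, List


def round_robin_shards(cluster_ids: List[str], replicas: int) -> Dict[str, int]:
    """Assign clusters to shards in turn, after sorting by cluster ID."""
    if replicas < 1:
        raise ValueError("replicas must be at least 1")
    # Sorting gives every replica the same, deterministic ordering.
    ordered = sorted(cluster_ids)
    return {cid: index % replicas for index, cid in enumerate(ordered)}


if __name__ == "__main__":
    ids = [f"cluster-{n}" for n in range(9)]
    shards = round_robin_shards(ids, replicas=5)
    print(sorted(Counter(shards.values()).items()))
    # -> [(0, 2), (1, 2), (2, 2), (3, 2), (4, 1)]
```

With nine clusters and five replicas, no replica ends up with more than one cluster more than any other. The trade-off of position-based assignment is that adding or removing a cluster can shift the position, and therefore the shard, of other clusters.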

How do you configure the Argo CD Application Controller to use the round-robin sharding algorithm?

To configure the sharding algorithm in Argo CD 2.8.0 or later, set controller.sharding.algorithm to round-robin in the argocd-cmd-params-cm ConfigMap. If you installed Argo CD using manifest files, connect to the cluster where Argo CD is running, replace the namespace placeholder in the following command, and run it:

```shell
kubectl patch configmap argocd-cmd-params-cm -n <argocd-namespace> --type merge -p '{"data":{"controller.sharding.algorithm":"round-robin"}}'
```

After the ConfigMap is updated, restart the Argo CD Application Controller StatefulSet so that it picks up the change:

```shell
kubectl rollout restart -n <argocd-namespace> statefulset argocd-application-controller
```

To verify that the Argo CD Application Controller is now using the round-robin sharding algorithm, run the following command:

```shell
kubectl exec -n <argocd-namespace> argocd-application-controller-0 -- env | grep ARGOCD_CONTROLLER_SHARDING_ALGORITHM
```

The expected output is:

```text
ARGOCD_CONTROLLER_SHARDING_ALGORITHM=round-robin
```

If you maintain Argo CD using the Helm chart, add the controller.sharding.algorithm: "round-robin" key-value pair under configs.params in your values file and install or upgrade the release to get the same result.

If you maintain Argo CD using the Argo CD Operator, add the ARGOCD_CONTROLLER_SHARDING_ALGORITHM environment variable under controller in the ArgoCD resource specification and set its value to round-robin. Make sure sharding is enabled for the controller through the sharding.enabled flag under controller, then apply the configuration.

Solution B: Manually define the shard

This is a workaround for when you don't want to upgrade the running Argo CD instance or prefer to manage sharding manually.

Define the shard for a new cluster

If you are adding a new cluster, set the shard key in the cluster Secret to the index of the Application Controller replica that should manage the cluster. For example:

```yaml
apiVersion: v1
kind: Secret
metadata:
  name: <secret-name>
  labels:
    argocd.argoproj.io/secret-type: cluster
  namespace: <secret-namespace>
type: Opaque
stringData:
  name: <cluster-name>
  server: <server-url>
  config: <configuration>
  shard: "<desired-application-controller-replica-index-here>"
```

Enter the shard value at .stringData.shard. When you inspect the Secret again, you will find the base64-encoded value at .data.shard. Note that the shard value must be a string, not an integer, so wrap it in quotes.
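To see what ends up at .data.shard, the Python sketch below encodes a shard index the way Kubernetes stores Secret data (base64 of the string's bytes) and decodes it back. The helpers encode_shard and decode_shard are hypothetical and are only meant to help you check a Secret by eye.

```python
import base64


def encode_shard(shard: int) -> str:
    """Return the base64 value Kubernetes stores at .data.shard for a given index."""
    if shard < 0:
        raise ValueError("shard must be a non-negative replica index")
    # stringData values are strings, so the index is written as text first.
    return base64.b64encode(str(shard).encode("utf-8")).decode("ascii")


def decode_shard(data_value: str) -> int:
    """Reverse the encoding to read the shard index back from .data.shard."""
    return int(base64.b64decode(data_value).decode("utf-8"))


if __name__ == "__main__":
    print(encode_shard(2))  # Mg==
```

So a Secret created with shard: "2" should show Mg== at .data.shard.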

If you want to add the cluster imperatively, pass the index of the Application Controller replica that should manage the cluster to the --shard argument. For example:

```shell
argocd cluster add <context-here> \
  --shard <desired-application-controller-replica-index-here>
```

Note that when you add the cluster imperatively, the shard is passed as an integer.

Update the shard for an existing cluster

If you want to manually define the shard for an existing cluster, edit the corresponding cluster Secret and add the following block:

```yaml
stringData:
  shard: "<desired-application-controller-replica-index-here>"
```

As with a new cluster, the value goes at .stringData.shard, Kubernetes stores it base64-encoded at .data.shard, and it must be a quoted string rather than an integer.
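If you prefer patching to interactive editing, the same stringData block can be sent as a JSON merge patch, for example with kubectl patch secret <secret-name> -n <secret-namespace> --type merge -p '<patch>'. The Python sketch below builds that payload. The helper shard_patch is hypothetical, and it converts the index to text so the patch respects the string-only rule for the shard value.

```python
import json


def shard_patch(shard: int) -> str:
    """Build a JSON merge patch that sets the shard key on a cluster Secret."""
    if shard < 0:
        raise ValueError("shard must be a non-negative replica index")
    # Secret data must be strings, so the replica index is converted to text.
    return json.dumps({"stringData": {"shard": str(shard)}})


if __name__ == "__main__":
    print(shard_patch(1))  # {"stringData": {"shard": "1"}}
```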

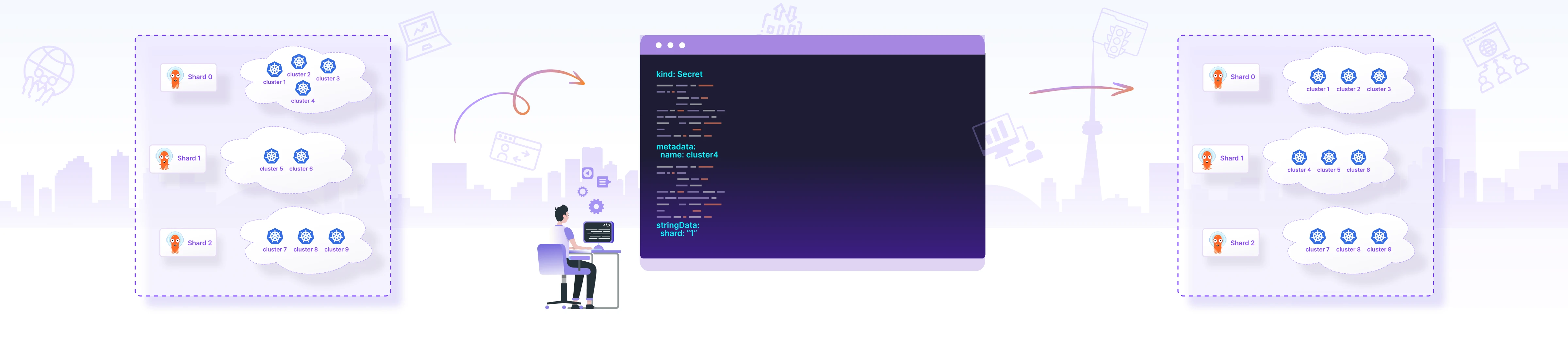

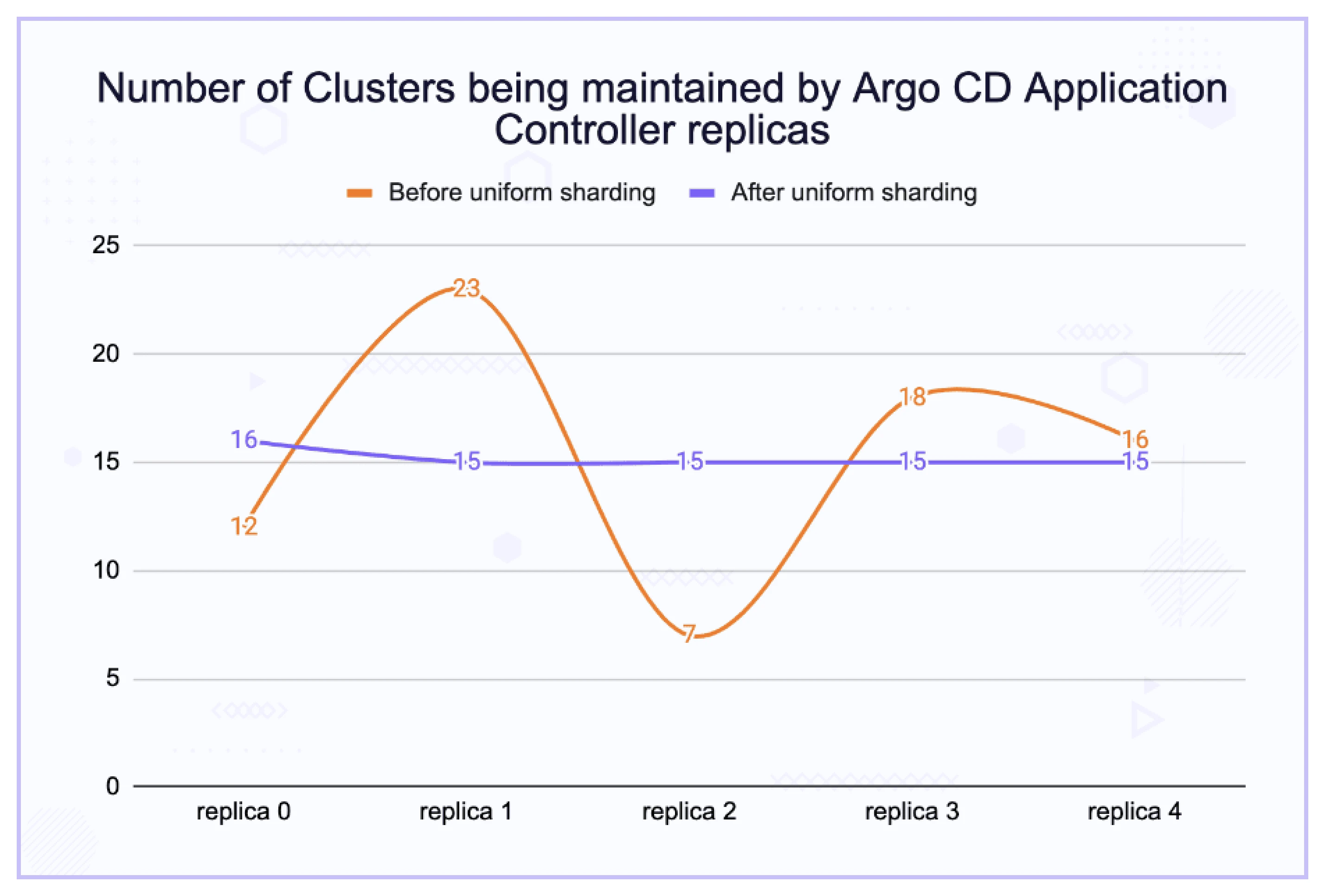

Once the sharding was done, the clusters were distributed evenly across the Application Controller replicas and managed efficiently, as the graph below shows:

(Argo CD Cluster Distribution)

Conclusion

In this post, we looked at different approaches to dealing with the uneven sharding of clusters across Argo CD Application Controller replicas. Although the built-in solution (the round-robin sharding algorithm) generally makes more sense, there are cases where you might prefer manual sharding. For example, if you have three clusters, where the first two run 400 applications each and the third runs 800, it makes sense for the first two clusters to share one shard and to dedicate another shard to the third cluster.
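The example above can be generalized into a simple planning aid for manual sharding. The Python sketch below uses a greedy "largest first" heuristic, which is our choice and not an Argo CD feature: clusters are sorted by application count and each is placed on the currently least-loaded shard. Application count is only a rough proxy for controller load, and the function plan_manual_shards and the cluster names are hypothetical.

```python
import heapq
from typing import Dict


def plan_manual_shards(app_counts: Dict[str, int], replicas: int) -> Dict[str, int]:
    """Greedily map clusters to shards so the largest per-shard app count stays low."""
    if replicas < 1:
        raise ValueError("replicas must be at least 1")
    # Min-heap of (current app load, shard index).
    heap = [(0, shard) for shard in range(replicas)]
    heapq.heapify(heap)
    plan: Dict[str, int] = {}
    # Place the biggest clusters first; the name breaks ties deterministically.
    for name, apps in sorted(app_counts.items(), key=lambda kv: (-kv[1], kv[0])):
        load, shard = heapq.heappop(heap)
        plan[name] = shard
        heapq.heappush(heap, (load + apps, shard))
    return plan


if __name__ == "__main__":
    print(plan_manual_shards({"cluster-a": 400, "cluster-b": 400, "cluster-c": 800}, 2))
```

For the 400/400/800 example with two replicas, this puts cluster-c alone on shard 0 and the other two clusters on shard 1, matching the split suggested above. The resulting indices can then be written into each cluster Secret's shard key.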

At the time of writing, there is ongoing discussion that the round-robin sharding algorithm in Argo CD 2.8.0 still has a logging problem (it generates too many logs). Even so, the change is a step in the right direction, and the issue is being worked on and should be fixed soon.

Note: Scaling the Application Controller should be done judiciously and should be aligned with the actual needs of your environment. Monitoring the performance and resource utilization of the Application Controller can help you make informed decisions about when and how to scale.

We at InfraCloud also help our customers with this kind of assessment and implement Argo CD to meet their requirements. If you are looking for help with GitOps adoption using Argo CD, check out our Argo CD consulting capabilities and expertise to see how we can help with your GitOps adoption journey. If you're looking for managed on-demand Argo CD support, check out our Argo CD support model.

I hope you found this post informative. For more posts like this one, subscribe to our weekly newsletter. I’d love to hear your thoughts on this post, so do start a conversation on LinkedIn :)

Additional Resources


Community Support

If you want to connect to the Argo CD community, please join CNCF Slack. You can join #argo-cd and many other channels too.
